The Skill Gap Returns (Again)

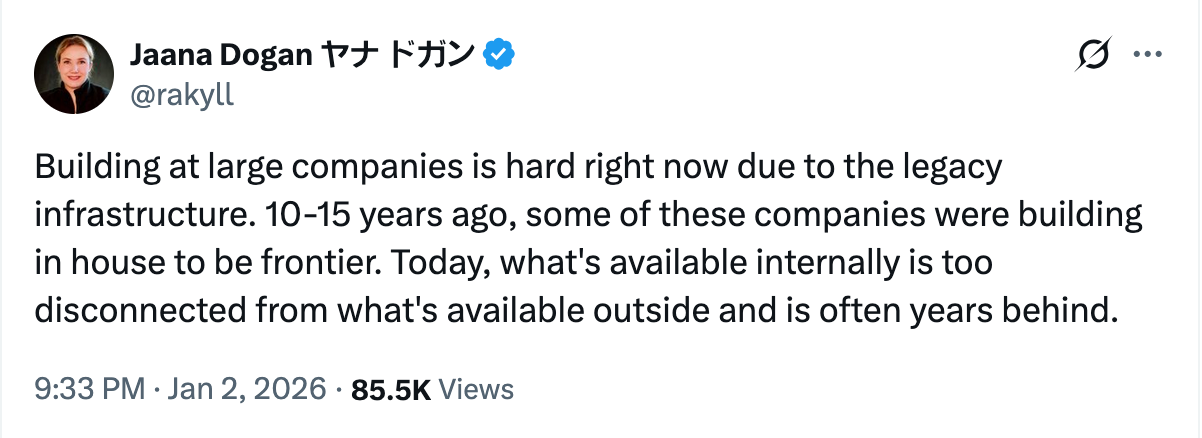

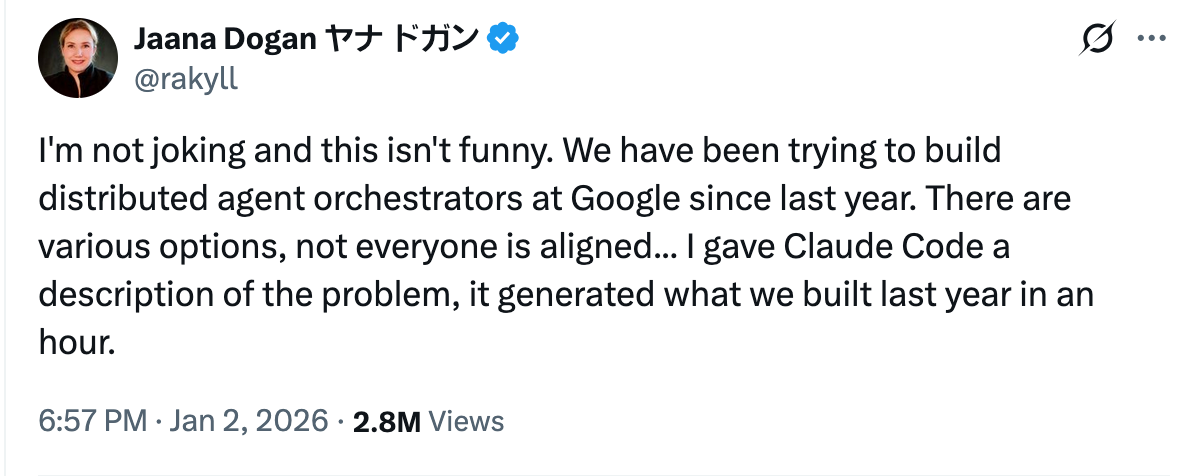

It appears instead as an awkward confession, often from someone who should not, by all appearances, be confessing at all. This week, that confession came from a Principal Engineer at Google.

She described how her team had spent the better part of a year attempting to build a distributed agent orchestration system. There were meetings. Alignment sessions. Internal tooling constraints. Legacy infrastructure. Reasonable explanations, all of them. Then, almost as an aside, she noted that she had described the same problem to an external AI coding system, and it had produced an equivalent solution in about an hour.

The internet reacted as it always does: with outrage, mockery, and misplaced diagnosis. Some declared this evidence of dysfunction at Google. Others framed it as a product comparison—Claude versus Gemini, this tool versus that. A few asked for credential verification, as though the truth of the observation might collapse if the speaker could be sufficiently discredited.

All of this misses the point.

The Real Pattern Is Older Than AI

I have seen this before. Not once, but many times.

I saw it when mobile computing arrived and entire organizations failed to understand the devices they were designing for. Bad workflows produced bad user experiences. Schools did not know how to teach it. Most professionals learned just enough to get by and no more.

I saw it again with cloud computing and network engineering. People skipped fundamentals—Linux, networking, systems thinking—and leapt directly into tooling. They could deploy, but they could not explain what they had deployed. When DevOps arrived, confusion deepened rather than resolved.

Then came crypto. Few were willing to study money before attempting to rebuild financial systems. Fewer still wanted to revisit trust, settlement, or history. Many wanted applications without primitives. The results were predictable.

Each time, the story was the same: a widening gap between those who studied deeply and those who merely adopted the surface forms of the new technology.

Artificial intelligence is not breaking this pattern. It is accelerating it.

Why This Moment Feels Uncomfortable

The discomfort people felt reading that engineer’s post was not about AI replacing programmers. That fear is too crude to explain the reaction.

What unsettled people was something more specific: the realization that a year of human coordination—meetings, approvals, compromises, incentives—could be compressed into a single, uninterrupted act of intent.

The AI did not need consensus. It did not require alignment. It did not need to negotiate priorities or career incentives. It simply executed the task as described.

This is not magic. It is not intelligence in the human sense. It is something far more threatening to existing structures: frictionless execution.

Large organizations are, by design, friction machines. They exist to coordinate many people over long periods of time. This coordination was once their great advantage. Now it is becoming their greatest liability.

Why Google Is Not the Exception

It is tempting to read this episode as a failure unique to Google. That temptation should be resisted.

If an organization with its own data centers, its own hardware accelerators, and some of the strongest engineers in the world feels this drag, then every organization beneath it feels it more acutely.

Most companies are not behind because they lack tools. They are behind because their internal rhythms were shaped for a slower era. Meetings replaced thinking. Process replaced mastery. Busyness replaced study.

AI does not tolerate this substitution.

The Quiet Truth About Adaptation

The real dividing line is not age, intelligence, or access. It is lifestyle.

To adapt meaningfully in this era requires long, uninterrupted periods of study. It requires daily experimentation. It requires the humility to feel ignorant repeatedly and publicly. It requires a willingness to rebuild one’s workflows from first principles rather than layering new tools atop old habits.

Most people cannot do this—not because they are incapable, but because their lives are optimized for something else. Families, meetings, obligations, and incentives were structured for a previous technological rhythm.

There is no shame in this. But there are consequences.

Why Old Institutions Rarely Come Back

History offers little comfort here. Institutions that miss a technological inflection point rarely recover their former dominance. They persist, often profitably, but no longer lead. Their internal logic becomes incompatible with the new reality.

This is not because the people within them are foolish. It is because incentives calcify faster than skills.

Artificial intelligence does not create this phenomenon. It merely removes the remaining ambiguity about it.

A Closing Observation

The significance of this moment is not that an AI wrote code quickly. That will soon be unremarkable.

The significance is that clarity of thought now outruns organizational power.

Those who can sit quietly, study deeply, and execute directly will build faster than those who must first align. This has been true before. It is simply more visible now.

The skill gap has returned—wider, sharper, and less forgiving than ever.

And as always, it will not close gently.