My Path Through AI, XR, and the GPU Edge

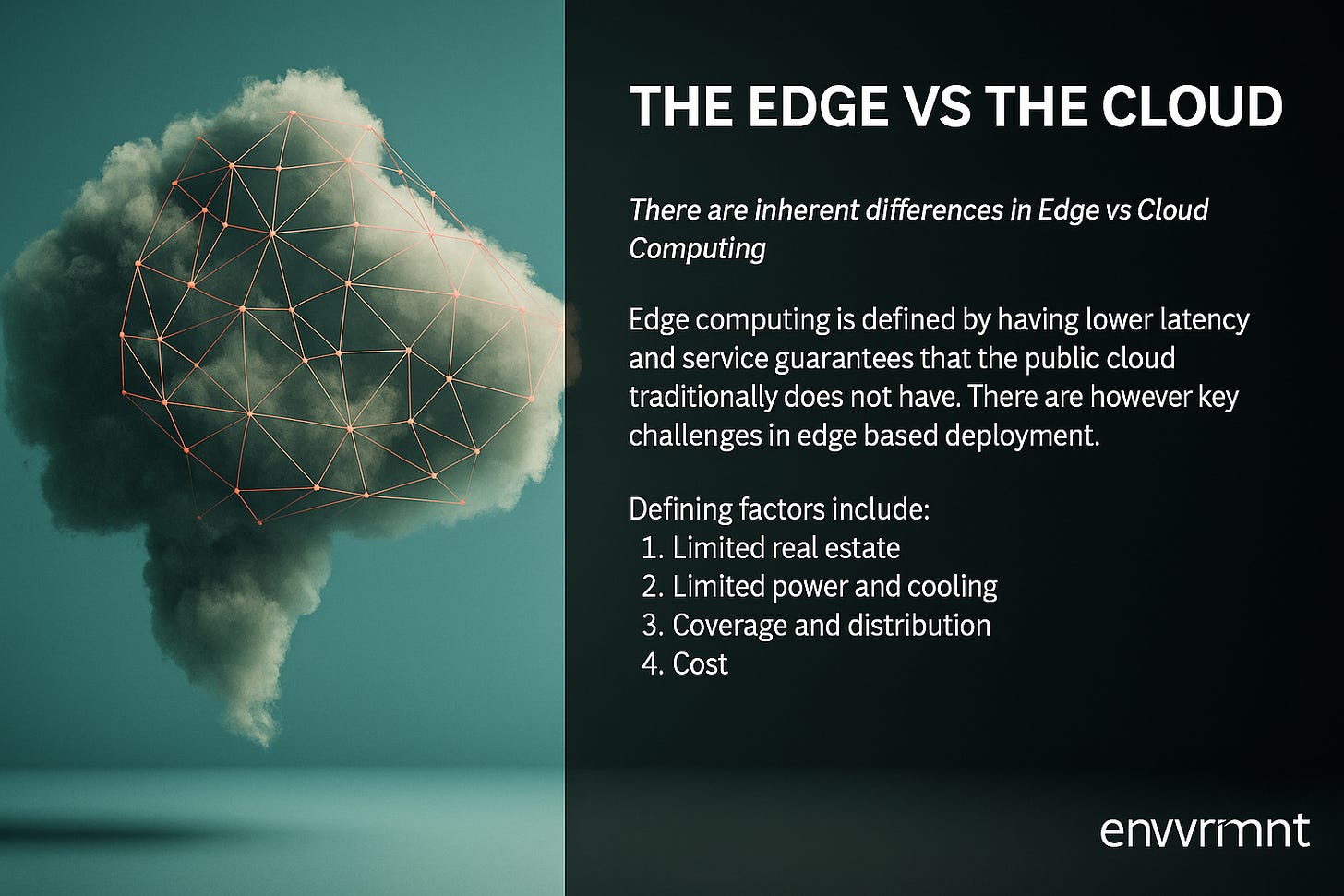

Over the last decade, I’ve lived through—and helped shape—a transformational period where artificial intelligence left the cloud and stepped into the real world. I didn’t just build AI models; I helped give them bodies. From self-driving car stacks to volumetric video over 5G, from container orchestration for XR rendering pipelines to GPU slicing at the edge, I didn’t see AI as “just math”—I saw it as a system. One that needed to be architected, distributed, and deployed.

And it all started not in the lab, but at the edge.

Getting a Toehold: GPU at the Network Perimeter

My early days in AI weren’t spent tuning transformers. I was deep in DevOps and edge infrastructure. When I joined Verizon Labs in 2016, we weren’t talking about generative AI—we were talking about how to deploy computer vision workloads at sub-20ms latency over 5G.

The technical challenges were enormous:

How do you slice GPUs for multi-tenant XR pipelines in dense metro areas?

How do you stream ray-traced frames in real time from edge servers to lightweight devices?

How do you manage orchestration across containerized GPU instances federated across a telco mesh?

I worked on all of that. We built real-time inference stacks for volumetric video using Magnum IO, offloaded lighting calculations for AR experiences to mobile edge compute clusters, and designed a custom hybrid renderer to decouple graphics pipelines from mobile thermal constraints. We weren’t theorizing—this was R&D with production constraints. We were streaming volumetric 3D experiences live at the Super Bowl using NVIDIA RTX cards and AWS Wavelength zones.

Finding My Niche: Distributed AI Compute

By 2019, I’d gone from DevOps Engineer to Architect of the “GPU Edge” stack. I was leading technical implementation on some of the first commercially viable XR/AI platforms in the U.S., architecting a GPU orchestration system capable of:

Volumetric video compression across edge nodes

Real-time spatial audio occlusion using edge DSPs

GPU slicing and federation across Kubernetes-managed clusters

XR rendering APIs embedded directly into Unreal Engine
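To make the GPU slicing item concrete, here is a minimal Python sketch of first-fit slice allocation across tenants. It is an illustration only, not the orchestration system described above: the `Gpu` class, the `allocate` function, the slice counts, and the tenant names are all hypothetical, and a real scheduler would also have to account for memory, isolation, and federation across clusters.

```python
from dataclasses import dataclass, field


@dataclass
class Gpu:
    """One physical GPU split into equal slices (a hypothetical, simplified model)."""
    name: str
    total_slices: int
    assignments: dict = field(default_factory=dict)  # tenant name -> slices held

    @property
    def free_slices(self) -> int:
        return self.total_slices - sum(self.assignments.values())


def allocate(gpus, tenant, slices_needed):
    """First-fit: place the tenant on the first GPU with enough free slices.

    Returns the GPU name, or None if no single GPU can host the request.
    """
    for gpu in gpus:
        if gpu.free_slices >= slices_needed:
            gpu.assignments[tenant] = gpu.assignments.get(tenant, 0) + slices_needed
            return gpu.name
    return None


# Two hypothetical edge GPUs with four slices each.
cluster = [Gpu("edge-gpu-0", total_slices=4), Gpu("edge-gpu-1", total_slices=4)]

# Requests arrive in order; each tenant asks for a number of slices.
placements = {
    "xr-render-a": allocate(cluster, "xr-render-a", 3),
    "vision-b": allocate(cluster, "vision-b", 2),
    "audio-c": allocate(cluster, "audio-c", 1),
    "xr-render-d": allocate(cluster, "xr-render-d", 4),
}

for tenant, gpu_name in placements.items():
    print(tenant, "->", gpu_name or "unplaced")
```

In this toy run the last request stays unplaced because no single GPU has four free slices left, which is the kind of fragmentation a production scheduler has to manage.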

I led efforts that influenced how the industry came to think about “edge-native” apps—not just offloading to the cloud, but pushing compute into the network.

We weren’t optimizing for benchmarks. We were optimizing for reality: battery limits, thermal constraints, jitter, real-time motion alignment, urban handoffs, fog computing. I called it “intelligent edge realism.”

The AI Inflection Point: When Agents Arrived

By 2020, AI had become the lingua franca. But where others saw large models in datacenters, I saw opportunity at the edge. Why should all inference live in the cloud? Why not make agents context-aware, latency-aware, bandwidth-aware?
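One way to picture a latency-aware, bandwidth-aware decision is a simple budget check: estimate the end-to-end time for each candidate location and pick the fastest one that fits. The Python sketch below is a simplified assumption of how that could look, not the logic we actually ran. The `Target` class, the `choose_target` function, and every number in it are hypothetical; the 20 ms budget echoes the sub-20ms goal mentioned earlier.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Target:
    name: str
    rtt_ms: float        # measured network round trip to this target
    infer_ms: float      # estimated model execution time on this target
    uplink_mbps: float   # bandwidth available from the device to this target


def total_latency_ms(target: Target, payload_mbit: float) -> float:
    """Round trip + time to upload the input + inference time."""
    upload_ms = payload_mbit / target.uplink_mbps * 1000.0
    return target.rtt_ms + upload_ms + target.infer_ms


def choose_target(targets, payload_mbit: float, budget_ms: float) -> Optional[Target]:
    """Pick the fastest target that fits the latency budget, or None if none fits."""
    viable = [t for t in targets if total_latency_ms(t, payload_mbit) <= budget_ms]
    return min(viable, key=lambda t: total_latency_ms(t, payload_mbit), default=None)


# Hypothetical measurements: the edge is close but slower per inference,
# the cloud is faster per inference but much farther away.
edge = Target("edge", rtt_ms=8.0, infer_ms=6.0, uplink_mbps=200.0)
cloud = Target("cloud", rtt_ms=45.0, infer_ms=3.0, uplink_mbps=200.0)

# A 0.4 Mbit camera frame against a 20 ms end-to-end budget.
choice = choose_target([edge, cloud], payload_mbit=0.4, budget_ms=20.0)
print(choice.name if choice else "no target fits the budget")
```

With these made-up numbers only the edge target fits the budget, because the cloud round trip alone exceeds it. A real system would feed this kind of check with live measurements rather than constants.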

We began embedding inference capabilities into the very fabric of our edge stack:

Spatially aware render pipelines with real-time computer vision on edge GPUs

Predictive caching and workload scheduling for AR streaming

Autonomous robotics stacks powered by edge inference on Kubernetes

We built real-time feedback loops between cloud and edge using TensorRT, cuDNN, and containerized inference microservices. My team helped build the first multi-layer rendering API stack that could render, infer, and respond across network topology layers.

Building for Resilience: Architecting Monoliths in Motion

I was there when 5G was more hype than reality. I helped make it real. We solved problems that only come from trying to make experiences happen—XR concerts, Super Bowl 360 streams, volumetric live sports.

I worked on custom file formats, transient and chunked GPU pipelines, lightmap and audio occlusion APIs, and intelligent routing protocols that optimized for carrier IP locality over DNS resolution. We invented things because we had to. And when the models finally got big enough—GPT-3 and beyond—I was already thinking about how to run inference pipelines with memory and agency at the edge.
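The idea of routing by carrier IP locality rather than DNS resolution can be sketched as a longest-prefix match from the client address to an edge site. This is a hedged illustration using only Python’s standard `ipaddress` module, not the protocol we built: the `EDGE_SITES` table, the site names, and `pick_site` are hypothetical, and the prefixes are reserved documentation ranges rather than real carrier allocations.

```python
import ipaddress

# Hypothetical mapping of carrier address blocks to the nearest edge site.
# These prefixes are documentation ranges (RFC 5737), not real allocations.
EDGE_SITES = {
    "192.0.2.0/24": "edge-metro-east",
    "198.51.100.0/24": "edge-metro-west",
    "198.51.100.128/25": "edge-stadium",
}
DEFAULT_SITE = "cloud-region"  # fallback when no carrier block matches


def pick_site(client_ip: str) -> str:
    """Return the site whose prefix most specifically contains the client address."""
    addr = ipaddress.ip_address(client_ip)
    best_site, best_len = DEFAULT_SITE, -1
    for cidr, site in EDGE_SITES.items():
        network = ipaddress.ip_network(cidr)
        # Prefer the longest (most specific) matching prefix.
        if addr in network and network.prefixlen > best_len:
            best_site, best_len = site, network.prefixlen
    return best_site


for ip in ("192.0.2.17", "198.51.100.5", "198.51.100.200", "203.0.113.9"):
    print(ip, "->", pick_site(ip))
```

The design choice here is that the client’s own address, not the resolver it happens to use, decides where traffic lands. A linear scan is fine for a handful of prefixes; a larger table would call for a trie or similar structure.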

From Pipelines to Products: Memory, Agents, and the Next Layer

Now, in 2025, my work has evolved again. I’m building Monmouth, an AI-native blockchain built for memory-rich, agentic execution. My belief is simple: the next great leap in AI isn’t just about bigger models—it’s about contextual intelligence. Memory-aware, edge-native agents capable of orchestrating real-time transactions.

Everything I learned over the last 10 years—from GPU orchestration to edge compute, from XR optimization to smart routing—is being poured into Monmouth.

I’m not just deploying AI. I’m giving it presence. With memory, with latency awareness, with distributed execution over verifiable compute layers.

Reflections

In 2015, I was working on volumetric compression and hybrid rendering before “AI on the edge” was a buzzword. By 2022, I was orchestrating GPU-based AI pipelines for self-driving cars, XR concerts, and spatial analytics. Today, I’m building the next layer of intelligent compute.

Not many engineers can say they’ve seen the AI evolution from GPUs at the edge all the way to on-chain agents powered by inference memory.

But I can. And I’m just getting started.