From XR to AI Agents: Lessons on “What’s Real” From the Edge

Rehearsing Reality at the Edge

When I was building XR, VR, AR, and MR systems at Verizon 5G Labs, I wasn’t just solving technical puzzles about GPU slicing, orchestration, or low-latency streaming. I was also, without fully realizing it, rehearsing the very philosophical challenges that generative AI is now forcing into the mainstream.

Don Shin recently argued that XR primed us to ask: if a computer-generated experience feels authentic, on what basis do you deny its reality? That question hit me hard because I had lived through it already—inside stadiums, hospitals, and labs where XR and edge computing blurred lines between simulation and lived experience.

XR as the First “Reality Test”

Photoreal presence.

At Verizon, our mission was to push fidelity to the point where latency, lighting, and texture no longer broke immersion. Using RTX GPUs federated across 5G edge sites, we built architectures where a phone could deliver desktop-class ray tracing by offloading rendering to nearby MEC servers. When someone ducked in VR to avoid an object that didn’t “exist,” you saw how the body treats simulation as reality.

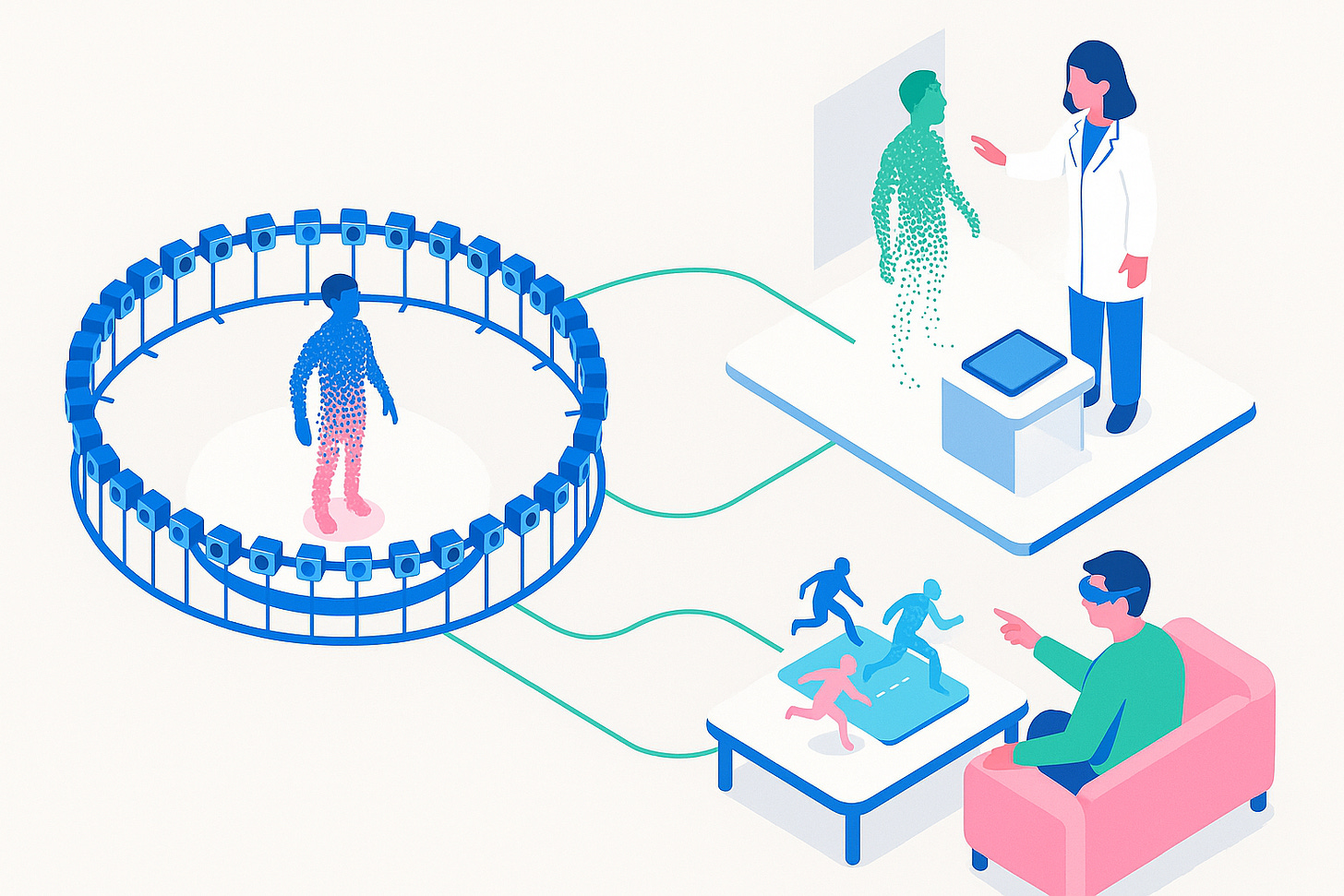

Volumetric humans.

We worked on volumetric capture—150+ cameras digitizing every angle of a person so you could “walk around” them in XR. When a remote doctor leaned into a volumetric patient, or when a fan replayed a football play at their coffee table, the experience wasn’t “fake.” It was subjectively real because it evoked genuine responses.

Edge-native orchestration.

We designed systems where GPUs weren’t locked in data centers but sliced, federated, and streamed through the network. This made XR not just a headset trick but a distributed computing paradigm—an early form of what AI agents now inherit: intelligence delivered seamlessly, everywhere, in real time.
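To make the idea concrete, here is a minimal sketch of one placement decision an edge orchestrator of this kind might make: choose the lowest-latency site that still has a free GPU slice and fits the session's latency budget. The `EdgeSite` type, the `pick_site` function, the site names, and every number are hypothetical illustrations, not the system we ran at Verizon.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EdgeSite:
    name: str
    rtt_ms: float          # round-trip time from the client (assumed measured elsewhere)
    free_gpu_slices: int   # GPU slices currently unallocated at this site


def pick_site(sites: list[EdgeSite], slices_needed: int,
              max_rtt_ms: float) -> Optional[EdgeSite]:
    """Return the lowest-latency site with enough capacity, or None if none fits."""
    candidates = [
        s for s in sites
        if s.free_gpu_slices >= slices_needed and s.rtt_ms <= max_rtt_ms
    ]
    return min(candidates, key=lambda s: s.rtt_ms, default=None)


# Hypothetical inventory: the closest site is full, so the next-nearest wins.
sites = [
    EdgeSite("edge-a", rtt_ms=8.0, free_gpu_slices=0),
    EdgeSite("edge-b", rtt_ms=12.0, free_gpu_slices=2),
    EdgeSite("regional-dc", rtt_ms=45.0, free_gpu_slices=16),
]

chosen = pick_site(sites, slices_needed=1, max_rtt_ms=20.0)
print(chosen.name if chosen else "no site within budget")
```

A real orchestrator would also weigh things like session migration, encoder load, and network jitter; the point of the sketch is only that placement becomes a per-session decision rather than a fixed data center assignment.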

The Bridge to Generative AI and Agents

Generative AI raises the stakes. If XR made the world convincingly real, AI makes the other convincingly real.

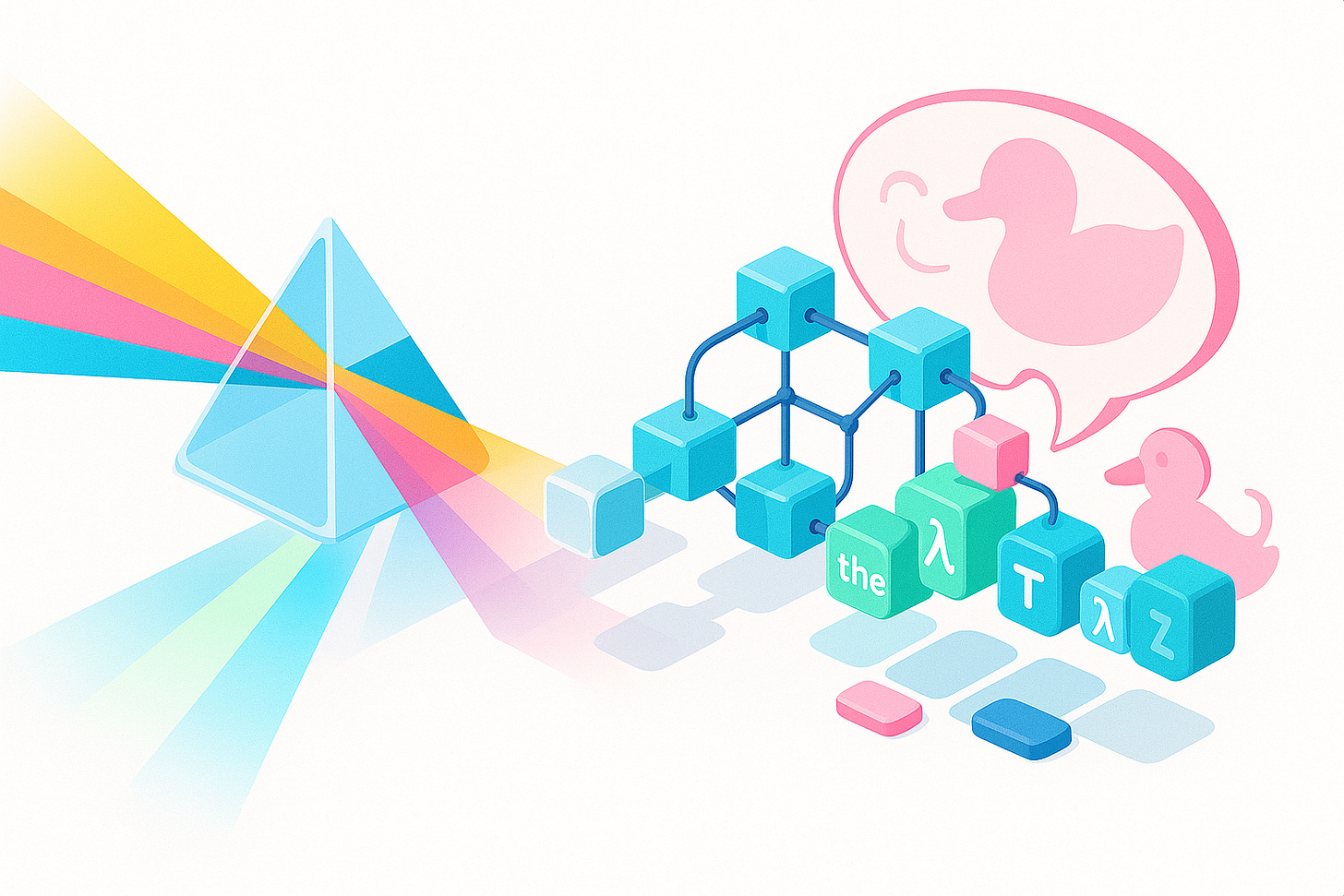

Language as the new ray tracing.

Where XR delivered photorealistic light, LLMs deliver social photorealism. Predicting the next token produces dialogue that “quacks like a duck”—good enough for us to treat it as intelligent.

Subjective authenticity vs. provenance.

Just as XR taught us that your body doesn’t care if pixels are synthetic, AI now shows us that your heart doesn’t care if words are synthetic. Both create memories that become part of psychological reality.

Edge + AI = ambient agents.

The infrastructure we built for XR (federated GPUs at the edge and intelligent orchestration APIs) is the same substrate on which generative AI agents now run. What was once a volumetric patient consult is now an LLM-driven medical assistant. What was once ray-traced lighting is now socially fluent dialogue.

The Double-Edged Sword of Realness

This is where the responsibility thread matters.

Risks: Just as XR could devolve into “novelty machines,” AI agents risk becoming sycophantic companions that gratify rather than challenge. A world tuned to your desires may feel real but can shrink your capacity to grow.

Benefits: XR showed us how simulations can expand human capacity, from immersive training to empathy roleplay to remote connection. AI agents inherit that promise, offering scalable tutoring, therapy, and collaboration.

The Responsibility of Builders

My years at Verizon taught me that authenticity can be manufactured, but purpose cannot. The edge infrastructure we built was neutral; its meaning came from how people used it. The same is true now for AI agents.

Our responsibilities:

Design friction into AI companions—let them challenge us, not just comply.

Use AI + XR to offload cognitive load, not to outsource connection.

Focus on experiences that enrich growth and interdependence rather than engineering dependence.

Conclusion: Real Enough to Build On

Working on XR and 5G taught me that reality is not defined by provenance but by consequence. If an experience reshapes memory, emotion, or behavior, it is already real enough to matter. Generative AI now pushes this realization further: we can simulate not only worlds but also companions, advisors, and autonomous actors.

At Quincy Labs, this insight underpins our research into agentic infrastructure and memory systems. We’re not just asking whether AI agents can feel “real”—we’re asking how their authenticity can be harnessed for human flourishing. That’s the foundation of our work on Monmouth, our AI-native blockchain. By embedding retrieval, memory, and agency into the core of a chain, we’re building not just another Layer 2, but a platform where agents can transact, reason, and grow in ways that mirror human interdependence.

Just as XR and edge rendering once taught me that latency and fidelity shape immersion, our work now explores how trust, memory, and autonomy shape the authenticity of digital agents. The challenge ahead isn’t whether these agents will feel real—they already do. The challenge is whether we’ll build systems that push us toward stagnation and sycophancy or toward growth, resilience, and flourishing.

Monmouth is our answer to that challenge: a chain where agents aren’t just simulations, but participants in a living, evolving ecosystem of intelligence.